Seeing the Full Picture: Dr. WonSook Lee Unites Augmented Reality and Art

How one University of Ottawa professor is using medical imaging and augmented reality to teach languages.

by Arianna Paquette

[Photo © WonSook Lee]

“Okay, just keep your back straight, speak clearly and remember that you have to press hard or else the ultrasound won’t pick anything up,” says WonSook Lee, as she flits around her University of Ottawa lab, her purple boots clacking quickly against the tile floor. She’s adjusting screens, nodding her head, tucking her long black hair behind her ears and flashing an ever-present smile.

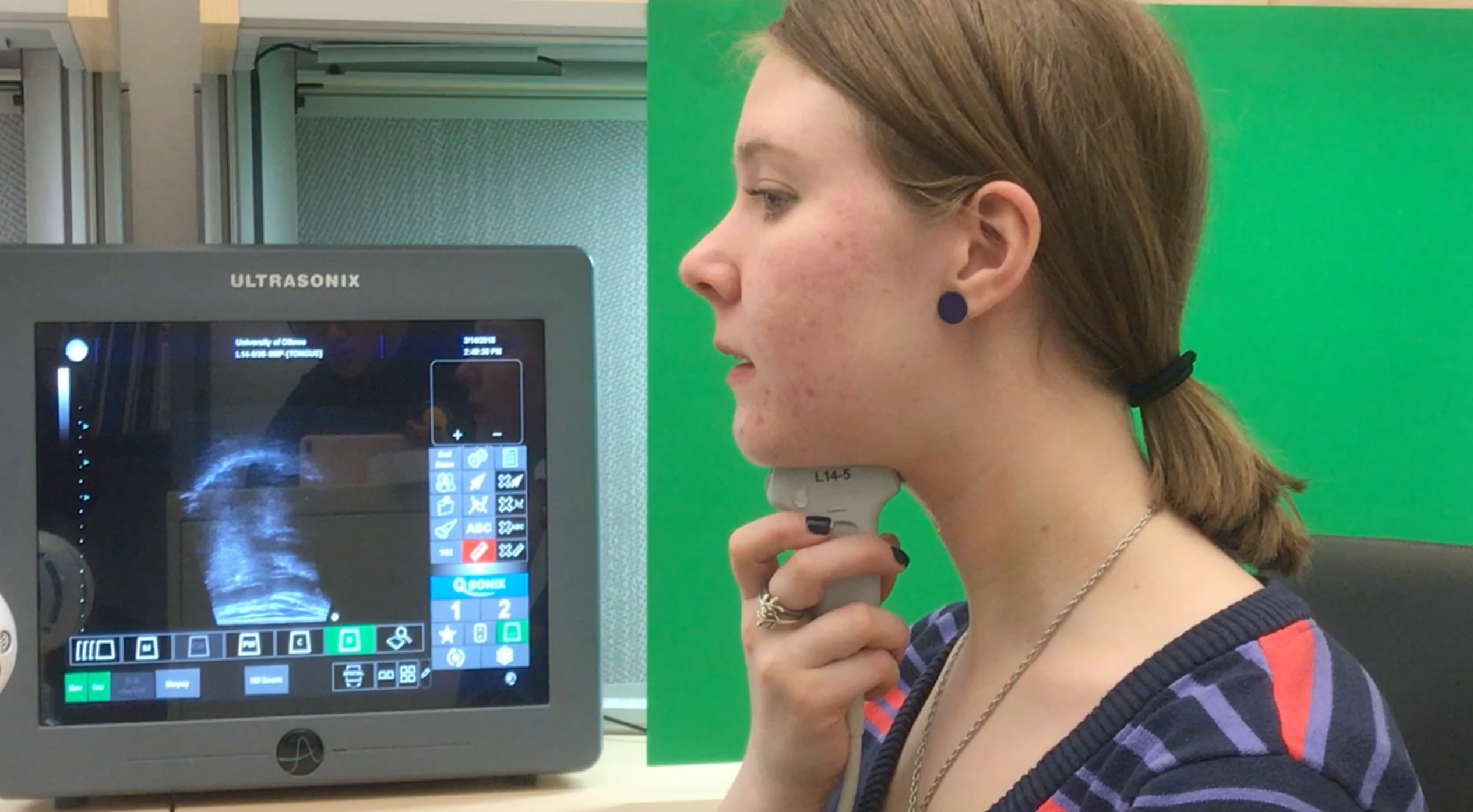

The white and tan lab, nestled in the heart of Ottawa U’s engineering department, isn’t much bigger than an office. But it’s carefully packed with a variety of objects: C++ programming textbooks, a desktop computer, three enormous bottles of ultrasound lubricant, and a small, desktop ultrasound machine that’s currently looking at the inside of my mouth, charting my tongue’s movements as I speak.

Lee’s research interests include augmented reality and artificial intelligence, and she wants to bring art and technology together in her work. She is examining how medical science imaging can be used in non-medical applications, like speech therapy.

Here, with an ultrasound pressed against the underside of my chin, Lee’s two passions, art and science, spring to life on the grainy black and white ultrasound screen.

As I speak, a video appears on the screen, capturing the subtle movements of my tongue. The videos are intended to show non-native speakers how to move their tongues in order to produce the right sounds and syllables.

Lee brings a unique perspective to technology: her main focuses are on humans and art, and the technological applications of both. Her glasses are hastily taped together at the bridge, coloured in with black marker. “I broke them a while ago, and I just didn’t have time to get a backup with me,” Lee explains, her English lightly accented with Korean.

Dr. WonSook Lee (centre) and her team, left to right: Austin W., Hamed Mozaffari, Nan Wang and Shenyong G.

Understanding People Through Visualization

Spurred on by her interests in humans and visualization, Lee wants to look for unconventional solutions to learning languages or conquering a fear of public speaking, by combining science and art.

Lee is a native of Cheongju, South Korea, a city about two hours away from the capital, Seoul. She was interested in technology from a young age, and always found herself fascinated by the unique differences between people.

“Some people like trees, so they do research for trees. But for me, I like people: their expressions, their emotions, their faces.”

Her interests in film and television animation, coupled with her fascination with people, led her to a computer science degree. She began her education at the Pohang University of Science and Technology (POSTECH), in Pohang, about three hours away from Seoul. Her fluctuating interests and unique research opportunities would eventually lead her to Europe and North America.

She worked as a teaching assistant and computer science researcher, but also spent a lot of her time watching computer-animated movies. She received her Bachelor of Science in 1991, then her master’s in 1993.

But Lee’s interests had shifted, and she wanted to focus on the medical applications of computer animation and 3-D modelling. This new interest brought her to the National University of Singapore, where she focused on creating 3-D reconstructions of catheters and other medical equipment.

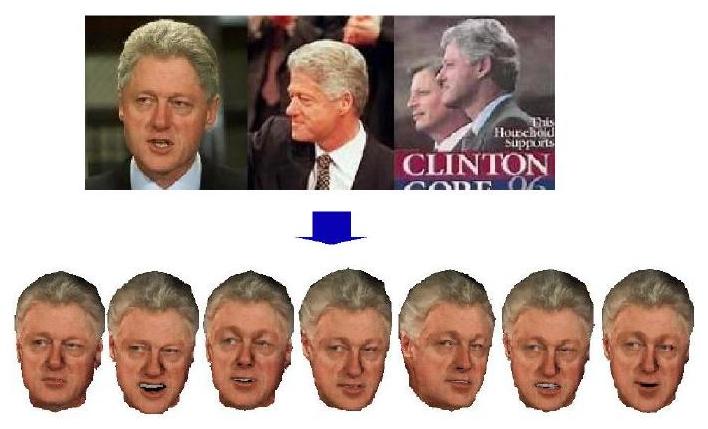

A facial clone of former US president Bill Clinton.

Photo by © WonSook Lee.

Learning Through Film

She then went to the University of Geneva for her PhD, to finally work on her passion: animating people. She took real people and recreated their features on a computer to create their virtual human models, or virtual clones of the real people. She remembers how computer animation was becoming more common, but the animation style could be hit or miss.

The Final Fantasy movie in particular stands out amongst Lee’s film memories, and taught her an important lesson about animating people. Released in 2001, it was one of the first photorealistic computer-animated feature films, with real actors voicing completely computer-animated characters. “People want animation to look different than real people, so it doesn’t confuse them,” Lee explains. “No matter how perfect computer animation is, it can’t replace real people.”

She says that movies like How to Train Your Dragon and Shrek are good examples of computer animation, because the characters look realistic enough, but not exactly like real people. Finally, Lee decided to come to Ottawa after receiving an offer to teach computer science at the University of Ottawa.

She explains that her travels helped to shape her perspective and approach to work. “I’m Asian, but I also spent a lot of time in Europe doing my PhD, and now I live in North America,” Lee muses. “I feel like I don’t belong anywhere, but I belong everywhere!”

The trailer for 2001’s Final Fantasy: The Spirits Within, based on the video game series.

The Diverse Applications of Augmented Reality

Her latest research returns to her earlier interests, with improved tools: augmented reality and its use in medical imaging, for more precise and accurate surgery. Where virtual reality creates an immersive, completely computer-generated world, augmented reality superimposes computer-generated images on the real world, viewed through a screen or goggles. A recent example of augmented reality is the mobile app Pokémon Go, which became a sensation after it was introduced in 2016.

Augmented reality is increasingly being used in medicine, and has a variety of uses. From locating veins more easily, to videos that explain the effects of medications, and even rehabilitation, augmented reality is helping doctors and medical professionals better understand and look inside the body.

Lee says that augmented reality can help bridge the disconnect between CT, X-ray or MRI scans and your body. “With AR, I can add the scan image on top of you, then I’ll know where I need to go,” Lee explains. “It increases the amount of information and cuts down on the time we have to spend on you.”

A video demonstrating AccuVein, a device that lights up the veins, making them easier for nurses to locate. It is one of many new applications of augmented reality in medical imaging.

A New Use for an Old Technology

But Lee is taking a common medical imaging technology, the ultrasound machine, and using it in non-medical applications, like teaching new languages and speech therapy.

“Yelling at people to move their tongue a certain way is very difficult to understand,” Lee says. “But when we visualize the native speaker’s tongue, it gives a better way of referencing, seeing and imitating.”

Hamed Mozaffari, a PhD student who is spearheading the project, has a script of words and syllables that are difficult for non-native English speakers to say, such as “sleet,” “career,” and “sch.”

He hopes that the study will expand the idea of using medical imaging for non-medical purposes. “We want to expand on virtual and augmented reality, and eventually apply this method for studying different organs in the human body.”

Imperfect Technology

But this study is not without its setbacks. Lee notes that although there has been “dramatic progress” in portability and in public interest in augmented reality, driven by apps and growing use of AR by news outlets, there are still some technical and social issues to keep in mind.

Computer vision and recognition issues are some of the biggest problems when dealing with augmented reality. In order to use augmented reality, a computer must understand the real world. Understanding the real world takes more effort than virtual reality, which replaces the real world.

“Computers read things through numbers, but in augmented reality, we need to understand the real world because we need to integrate information.”

Mozaffari echoes Lee’s frustrations with the limits of current technology. “The biggest challenges are always technological: low-quality ultrasound images, long post-processing times, and synchronizing the data between the ultrasound and the speaker,” Mozaffari explains. “Sometimes it feels like it takes forever,” he adds with a chuckle.

Another of Lee’s students, Austin Wen, appreciates her unique, human-centred approach to technology. “She inspires us to focus not only on the spatial information in images, but also by using our own experiences to think about things.” He adds that Lee is great at organizing and sharing her knowledge with all her students, and is never reluctant to take action.

The Good and the Bad

In the end, Lee says, however good the technology becomes, it can’t be a substitute for human interaction. As augmented reality becomes more widespread, the social issues that may come with it matter more. Lee acknowledges that virtual and augmented reality can create problems in the real world.

“When people become more and more dependent on technology, then there’s always that worry that people will ignore real people because they are too deep into the digital world.”

Lee’s goal is simple: she wants people to use her technology, and to see how the benefits of science and the benefits of art can be combined in ways that help people, like teaching new languages.

Amid all the technological and virtual reality components of her work, Lee always makes people her main focus. She counts among her favourite memories a moment 25 years ago, when she created an age-progressed image for a family whose son had died in early childhood, using the boy’s photo as well as photos of his parents.

“She later sent me an email and said that every family member was crying, which was so touching,” reflects Lee. “It didn’t take a lot of time for me, but it was still so rewarding for me to do.”